On one side is the Leica M-Monochrom, built to take only black-and-white pictures. This camera removes the Bayer filter completely.

On the other side, the FujiFilm X-Pro1 replaces the Bayer filter with "a different one", laid out "randomly"...

In both cases, the cameras offer higher definition by eliminating the low-pass filter... But what does this mean to mere mortals like us? Let's go step by step...

The human eye

Human vision is most sensitive to green light, then to red, and only slightly to blue. This is the main difference from electronic sensors.

To produce "real-looking" pictures, digital cameras boost green, then red, and may reduce the intensity of blue.

Digital sensors...

The typical weights that digital cameras work with (also for the conversion to black and white!) are as follows:

- Green colour: 59%

- Red colour: 30%

- Blue colour: 11%
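To see what these percentages do in a black-and-white conversion, here is a minimal sketch in Python. It only assumes that each channel is a number between 0 and 255; the names `WEIGHTS` and `to_gray` are made up for this example and do not come from any camera firmware.

```python
# Channel weights quoted in the text: green 59%, red 30%, blue 11%.
WEIGHTS = {"r": 0.30, "g": 0.59, "b": 0.11}


def to_gray(r, g, b):
    """Weighted sum of the three channels (each expected in the range 0..255)."""
    return WEIGHTS["r"] * r + WEIGHTS["g"] * g + WEIGHTS["b"] * b


# A pure green patch ends up much brighter in grey than a pure blue one,
# while white keeps (approximately) its full value.
pure_green = to_gray(0, 255, 0)
pure_blue = to_gray(0, 0, 255)
white = to_gray(255, 255, 255)
```

The point of the sketch is simply that equal amounts of green and blue light do not give the same grey level: green dominates the result.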

To simplify the electronics design, a colour grid is applied over the sensor that gives the green channel twice the light input, so that the summed values fall in similar ranges (at least for red and green).

In the real world, this grid is built from 2x2 cells with the following structure (called a Bayer filter):

All four readings (two green, one red, one blue) are processed to give the output values of a single pixel, with the input intensities adjusted according to the percentages above.
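As a rough illustration of how four readings could become one pixel, the sketch below assumes the common RGGB ordering of the cell and simply averages the two green readings. Real cameras use more elaborate interpolation, so `cell_to_pixel` and `bayer_colour` are hypothetical helpers for this example only.

```python
# One 2x2 Bayer cell, assuming the common RGGB ordering.
BAYER_CELL = [["R", "G"],
              ["G", "B"]]


def bayer_colour(row, col):
    """Colour of the filter sitting over the sensor site at (row, col)."""
    return BAYER_CELL[row % 2][col % 2]


def cell_to_pixel(readings):
    """Collapse the four readings of one cell into a single (r, g, b) pixel.

    `readings` is a 2x2 list laid out like BAYER_CELL.
    The two green readings are averaged; red and blue are used as they are.
    """
    sums = {"R": 0.0, "G": 0.0, "B": 0.0}
    counts = {"R": 0, "G": 0, "B": 0}
    for row in range(2):
        for col in range(2):
            colour = BAYER_CELL[row][col]
            sums[colour] += readings[row][col]
            counts[colour] += 1
    return tuple(sums[c] / counts[c] for c in "RGB")


# Red site reads 200, the two green sites read 120 and 100, the blue site reads 40.
pixel = cell_to_pixel([[200, 120], [100, 40]])
```

Note that the cell carries two green sites against one red, which is close to the 59% to 30% ratio of the weights listed earlier.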

Problem #1: Sensor reading

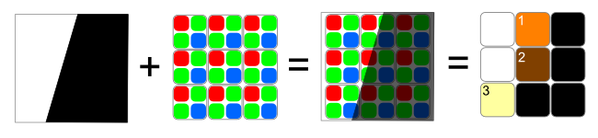

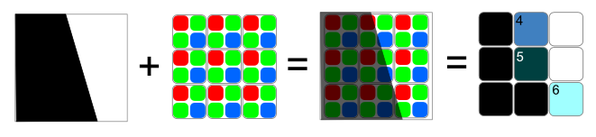

The first problem appears when the subjects in the picture have strong lines or high-contrast contours, which can produce false readings at the sensor. Let's check two examples:

Border line 1: On average, there are more readings of red light than of blue. In this case, warm tones are (falsely) produced, and we will see orange (1), brown (2) or yellow (3) colours.

Border line 2: There is more blue information than red. In this case, the "ghost" contours will have cold colours, in shades of blue and magenta (4, 5 and 6).

The final result for our object could be (simplified!) as follows:
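The effect can be reproduced with a toy model. The sketch below assumes an RGGB cell and a perfectly vertical black-and-white edge that lights only one column of the cell; this geometry is a simplification chosen for the example and is not necessarily the one in the figures. The helper `read_cell` is hypothetical.

```python
BAYER_CELL = [["R", "G"],
              ["G", "B"]]  # assumed RGGB ordering


def read_cell(lit_columns):
    """Return the (r, g, b) pixel for one 2x2 cell lit by white light.

    `lit_columns` is the set of column indices (0 and/or 1) that receive light;
    the other column stays black. Readings of the same colour are averaged.
    """
    sums = {"R": 0.0, "G": 0.0, "B": 0.0}
    counts = {"R": 0, "G": 0, "B": 0}
    for row in range(2):
        for col in range(2):
            colour = BAYER_CELL[row][col]
            sums[colour] += 255.0 if col in lit_columns else 0.0
            counts[colour] += 1
    return tuple(sums[c] / counts[c] for c in "RGB")


warm_edge = read_cell({0})      # only the red/green column is lit
cold_edge = read_cell({1})      # only the green/blue column is lit
flat_white = read_cell({0, 1})  # no edge inside the cell
```

In this model the first edge gives full red, half green and no blue (an orange tone), the second gives full blue, half green and no red (a cold blue tone), and a cell without an edge reads neutral white. The subject itself has no colour at all; the tint comes only from where the edge falls on the grid.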

Problem #2: Colour

Of course, real objects are not just black and white. An object of any other colour will behave differently through the Bayer grid.

Furthermore, if the distortion (deviation) effects of lenses (which may already produce ghost contours by themselves) are taken into account, the problem of wrong colours at sharp contours gets worse...

And the solutions...

The basic way to reduce (not to fully eliminate...) this effect is to introduce a further filter, a low-pass one.

This filter slightly blurs the image, so that each point is averaged with its surrounding points and the output is more uniform in colour. But then local contrast is reduced. This is the main drawback of Bayer-filter cameras.
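The trade-off can be sketched with a simple box blur over one row of pixels. This is a software stand-in for illustration only, not a description of how the filter in a camera is built; `box_blur` and `saturation` are names invented for this example.

```python
def box_blur(row, radius=1):
    """Average each (r, g, b) pixel with its neighbours within `radius`.

    Near the ends of the row the window is simply cut short.
    """
    out = []
    for i in range(len(row)):
        lo, hi = max(0, i - radius), min(len(row), i + radius + 1)
        window = row[lo:hi]
        out.append(tuple(sum(p[c] for p in window) / len(window) for c in range(3)))
    return out


def saturation(pixel):
    """Crude colour-cast measure: spread between strongest and weakest channel."""
    return max(pixel) - min(pixel)


white = (255.0, 255.0, 255.0)
black = (0.0, 0.0, 0.0)
orange = (255.0, 127.5, 0.0)  # a falsely coloured pixel sitting on the edge

row = [white, white, orange, black, black]
blurred = box_blur(row)

cast_before = saturation(row[2])
cast_after = saturation(blurred[2])
edge_step_before = row[1][1] - row[3][1]          # green step across the edge
edge_step_after = blurred[1][1] - blurred[3][1]
```

After the blur the orange pixel is far less saturated, but the step in brightness across the edge is also smaller: the colour error is softened at the cost of local contrast.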

Leica M-Monochrom

Of course, if the Bayer filter is completely removed (as in the Leica camera), Problem #1 (colour estimation) disappears. The sensor reads only light intensity, so colour correction is not needed either.

The reading is closer to the "Luminance" (L, lightness) value in the Lab system. And, as the low-pass filter is no longer needed, local contrast is visibly improved...

FujiFilm X-Pro1

An intermediate step, probably less radical, is to use a colour filter that is not as simple as the Bayer one. This still allows colour shooting. The main condition (to keep the firmware relatively unchanged) is that green stays the dominant colour, with at least double the share of red (20 green sites against 8 red and 8 blue in each tile). Fuji's current proposal uses a 6x6 base matrix as follows:

Now, statistically, clean contours are less likely to produce a strong halo effect, and the result is optically better...
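To make the statistical argument a bit more concrete, the sketch below compares a 2x2 Bayer tile with a 6x6 tile. The 6x6 layout written here is one commonly published rendering of Fuji's pattern and should be taken as an assumption of this example; the helper names are hypothetical.

```python
# Assumed 6x6 tile (one commonly published rendering of the Fuji layout).
XTRANS_TILE = [
    "GBGGRG",
    "RGRBGB",
    "GBGGRG",
    "GRGGBG",
    "BGBRGR",
    "GRGGBG",
]
# 2x2 Bayer tile, assuming RGGB ordering.
BAYER_TILE = ["RG", "GB"]


def colour_counts(tile):
    """Count how many sites of each colour the tile contains."""
    counts = {"R": 0, "G": 0, "B": 0}
    for row in tile:
        for colour in row:
            counts[colour] += 1
    return counts


def lines_missing_a_colour(tile):
    """Number of rows and columns of the tile that lack at least one of R, G, B."""
    columns = ["".join(row[i] for row in tile) for i in range(len(tile[0]))]
    return sum(1 for line in list(tile) + columns if set(line) != {"R", "G", "B"})


xtrans_counts = colour_counts(XTRANS_TILE)
bayer_counts = colour_counts(BAYER_TILE)
xtrans_gaps = lines_missing_a_colour(XTRANS_TILE)
bayer_gaps = lines_missing_a_colour(BAYER_TILE)
```

In the Bayer tile every row and every column is missing one colour, which is why a clean horizontal or vertical edge can starve a channel. In the assumed 6x6 tile every row and column contains all three colours, so a straight edge is less likely to favour red or blue systematically.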

Originally posted on Jul 12th 2012 (Over-Blog)
